Is Terraform better than Helm for Kubernetes?

I have been an avid user of Terraform and use it for many infrastructure tasks, from provisioning machines to setting up my whole stack. When I started working with Helm, my first impression was “Wow! This is like Terraform for Kubernetes resources!”. What rekindled these thoughts was HashiCorp's announcement, a few weeks ago, of a Kubernetes provider for Terraform. So one can use Terraform to provision infrastructure as well as to manage Kubernetes resources. I decided to take both for a test drive and see what works better in one vs. the other. Before we get to the meat, here is a quick recap of similarities and differences. For brevity, when I mention Terraform in this blog post, I am referring to the Terraform Kubernetes provider.

There are some key similarities:

* Both allow you to describe and maintain your Kubernetes objects as code. Helm uses the standard manifests along with Go templates, whereas Terraform uses the JSON/HCL file format.
* Both allow the use of variables and overriding those variables at various levels, such as files and the command line. Terraform additionally supports environment variables.
* Both support modularity (Helm has sub-charts, while Terraform has modules).
* Both provide a curated list of packages (Helm has stable and incubator charts, while Terraform has recently started the Terraform Module Registry, though as of writing there are no modules in the registry that work on Kubernetes yet).
* Both allow installation from multiple sources, such as local directories and git repositories.
* Both allow a dry run of actions before actually running them (Helm has a --dry-run flag, while Terraform has the plan subcommand).

With this premise in mind, I set out to try and understand the differences between the two. I took a simple use case with the following objectives:

* Install Kubernetes cluster (possible with Terraform only)
* Install Guestbook app
* Upgrade Guestbook app
* Roll back the upgrade

Setup: Provisioning Kubernetes Cluster

In this step we will create a Kubernetes cluster via Terraform. Follow the steps listed below:

* Clone this git repo.
* Make sure the kubectl, terraform, ssh and helm binaries are available in the shell you are working in.
* Create a file called terraform.tfvars with the following content:

<code>do_token = "YOUR_DigitalOcean_Access_Token"
# You will need a token for kubeadm; it can be generated with the following command
# (zero-padded so the token always has the 6.16 character layout kubeadm expects):
# python3 -c 'import random; print("%06x.%016x" % (random.SystemRandom().getrandbits(3*8), random.SystemRandom().getrandbits(8*8)))'
kubeadm_token = "TOKEN_FROM_ABOVE_PYTHON_COMMAND"
# private_key_file = "/c/users/harshal/id_rsa"
private_key_file = "PATH_TO_YOUR_PRIVATE_KEY_FILE"
# public_key_file = "/c/users/harshal/id_rsa.pub"
public_key_file = "PATH_TO_YOUR_PUBLIC_KEY_FILE"
</code>
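The Python one-liner in the comments above can also be written as a small script. This is only a sketch: it assumes Python 3 and the `[a-z0-9]{6}.[a-z0-9]{16}` token layout that kubeadm expects, and the names `make_kubeadm_token` and `TOKEN_PATTERN` are made up for illustration.

```python
import re
import secrets

# Assumed kubeadm token layout: "<6 chars>.<16 chars>", lowercase alphanumerics.
TOKEN_PATTERN = re.compile(r"[a-z0-9]{6}\.[a-z0-9]{16}")


def make_kubeadm_token() -> str:
    """Return a random token; zero-padding keeps both parts at full length."""
    token_id = f"{secrets.randbits(3 * 8):06x}"       # 3 random bytes -> 6 hex digits
    token_secret = f"{secrets.randbits(8 * 8):016x}"  # 8 random bytes -> 16 hex digits
    return f"{token_id}.{token_secret}"


if __name__ == "__main__":
    token = make_kubeadm_token()
    # Fail loudly if the generated token does not match the assumed layout.
    assert TOKEN_PATTERN.fullmatch(token)
    print(token)
```

The zero-padding matters because an unpadded hex value can come out shorter than 6 or 16 characters when the random number happens to be small.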

Now we will run a set of commands to provision the cluster:

* terraform get, so that Terraform picks up all modules
* terraform init, so that Terraform pulls all required plugins
* terraform plan, to validate that everything will run as expected
* terraform apply -target=module.do-k8s-cluster, to create the cluster

This will create a 1-master-3-worker kubernetes cluster and copy a file called admin.conf to ${PWD} which can be used to interact with the cluster via kubectl. Run export KUBECONFIG=${PWD}/admin.conf to use this file for this session. You can also copy this file to ~/.kube/config if required. Ensure your cluster is ready by running kubectl get nodes. The output should show all nodes in Ready status.

<code>
NAME      STATUS    AGE       VERSION
master    Ready     4m        v1.8.2
node1     Ready     2m        v1.8.2
node2     Ready     2m        v1.8.2
node3     Ready     2m        v1.8.2
</code>

Terraform Kubernetes Provider

Install Guestbook

Run terraform apply -target=module.gb-app, then verify that all pods and services are created by running kubectl get all. You should now be able to access Guestbook on node port 31080. You will notice that we have implemented Guestbook using Replication Controllers and not Deployments. That is because the Kubernetes provider in Terraform does not support beta resources; there is more discussion about this in the terraform-provider-kubernetes repo. Under the hood we are using simple declaration files, and the rc.tf and services.tf files in the gb-module directory should be self-explanatory.

Update Guestbook

Since the application is deployed via Replication Controllers, changing the image is not enough: we also need to scale down the old pods and scale up new ones. So we will scale the RC down to 0 pods and then scale it up again with the new image. Run terraform apply -var 'fe_replicas=0' && terraform apply -var 'fe_image=harshals/gb-frontend:1.0' -var 'fe_replicas=3'. Verify the updated application at NodePort 31080.

Rollback the application

Again, without Deployments, rolling back RCs is a little more tedious. We scale the RC down to 0 and then bring back the old image. Run terraform apply -var 'fe_replicas=0' && terraform apply. This will bring the pods back to their default image version and replica count.
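The update and rollback steps above follow the same pattern: one apply that scales the RC to zero, then a second apply with the desired settings. A minimal Python sketch of that pattern is below. It only builds and prints the command lines and does not run Terraform; the helper names `terraform_apply` and `recreate_frontend` are hypothetical, while the variable names `fe_image` and `fe_replicas` are the ones used in this post.

```python
import shlex
from typing import Dict, List, Optional


def terraform_apply(variables: Optional[Dict[str, str]] = None) -> List[str]:
    """Build the argv for `terraform apply` with optional -var overrides."""
    argv = ["terraform", "apply"]
    for name, value in (variables or {}).items():
        argv += ["-var", f"{name}={value}"]
    return argv


def recreate_frontend(image: Optional[str] = None,
                      replicas: Optional[int] = None) -> List[List[str]]:
    """Scale the RC to zero, then apply again with the desired settings.

    Leaving image/replicas as None passes no overrides on the second apply,
    so the module defaults are used (the rollback case in this post).
    """
    overrides: Dict[str, str] = {}
    if image is not None:
        overrides["fe_image"] = image
    if replicas is not None:
        overrides["fe_replicas"] = str(replicas)
    return [terraform_apply({"fe_replicas": "0"}), terraform_apply(overrides)]


if __name__ == "__main__":
    # Update: scale down, then scale up with the new image.
    for argv in recreate_frontend("harshals/gb-frontend:1.0", 3):
        print(shlex.join(argv))
    # Rollback: scale down, then a plain apply to restore the defaults.
    for argv in recreate_frontend():
        print(shlex.join(argv))
```

To actually execute the steps, each argv could be passed to `subprocess.run(argv, check=True)`, which stops at the first failing command much like `&&` does in the shell.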

Helm

Install Guestbook

Now we will install Guestbook on the same cluster, in a different namespace, using Helm. Ensure you are pointing to the correct cluster by running export KUBECONFIG=${PWD}/admin.conf. Since we are running Kubernetes 1.8.2 with RBAC, run the following commands to give Tiller the required privileges and initialize Helm:

<code>kubectl -n kube-system create sa tiller
kubectl create clusterrolebinding tiller --clusterrole cluster-admin --serviceaccount=kube-system:tiller
helm init --service-account tiller --upgrade
</code>

(Courtesy: https://gist.github.com/mgoodness/bd887830cd5d483446cc4cd3cb7db09d )

Run the following command to install Guestbook in the “helm-gb” namespace:

helm install --name helm-gb-chart --namespace helm-gb ./helm_chart_guestbook

Verify all pods and services are created by running helm status helm-gb-chart

Upgrade Guestbook

In order to perform a similar upgrade via Helm, run the following command (helm upgrade takes the chart path as well as the release name):

helm upgrade helm-gb-chart ./helm_chart_guestbook --set frontend.image=harshals/gb-frontend:1.0

Since the application is using Deployments, the upgrade is a lot easier. Run

helm status helm-gb-chart

to view the upgrade taking place and old pods being replaced with new ones.

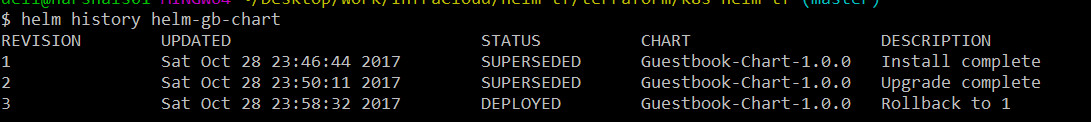
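To make the effect of --set a little more concrete, here is a simplified Python sketch of how a dotted key such as frontend.image is laid over a chart's default values. It is not Helm's actual parser (which also handles lists, commas and escaping), and the `DEFAULTS` dictionary is a hypothetical stand-in for the chart's values.yaml.

```python
import copy
from typing import Any, Dict


def set_value(values: Dict[str, Any], assignment: str) -> Dict[str, Any]:
    """Return a copy of `values` with one `a.b.c=value` override applied."""
    path, _, value = assignment.partition("=")
    result = copy.deepcopy(values)  # leave the defaults untouched
    node = result
    *parents, leaf = path.split(".")
    for key in parents:
        # Create intermediate maps as needed while walking the dotted path.
        node = node.setdefault(key, {})
    node[leaf] = value
    return result


# Hypothetical chart defaults; the real values.yaml of the chart may differ.
DEFAULTS = {"frontend": {"image": "example/gb-frontend:0.1", "replicas": 3}}

if __name__ == "__main__":
    merged = set_value(DEFAULTS, "frontend.image=harshals/gb-frontend:1.0")
    print(merged)
```

Only the overridden key changes; everything else in the defaults, such as the replica count here, is carried over as-is.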

Rollback

The revision history of the release can be viewed via helm history helm-gb-chart

Run the following command to perform the rollback:

helm rollback helm-gb-chart 1

Run helm history helm-gb-chart to get rollback confirmation as shown below:

[Image: helm-rollback]
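One detail worth knowing when reading the history: a rollback does not remove the upgraded revision. Helm records a new revision that reuses the configuration of the one you rolled back to. The toy Python model below illustrates this bookkeeping only; the class and field names are hypothetical, the old image name is a placeholder, and none of this is Helm's real implementation.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Revision:
    number: int
    image: str
    description: str


class ReleaseHistory:
    """Toy model: install, upgrade and rollback each append a new revision."""

    def __init__(self, image: str) -> None:
        self.revisions: List[Revision] = [Revision(1, image, "Install complete")]

    def _append(self, image: str, description: str) -> Revision:
        revision = Revision(len(self.revisions) + 1, image, description)
        self.revisions.append(revision)
        return revision

    def upgrade(self, image: str) -> Revision:
        return self._append(image, "Upgrade complete")

    def rollback(self, number: int) -> Revision:
        # Reuse the settings of the target revision under a new revision number.
        target = self.revisions[number - 1]
        return self._append(target.image, f"Rollback to {number}")


if __name__ == "__main__":
    history = ReleaseHistory("example/gb-frontend:0.1")  # placeholder old image
    history.upgrade("harshals/gb-frontend:1.0")
    history.rollback(1)
    for revision in history.revisions:
        print(revision.number, revision.image, revision.description)
```

In this model, rolling back to revision 1 after one upgrade produces a third revision with the original image, which is the shape you should expect to see in helm history.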

Cleanup

Make sure to clean up the cluster by running terraform destroy

Pros and Cons

We already saw the similarities between helm and Terraform pertaining to the management of Kubernetes resources. Now let’s look at what works well and doesn’t with each of them:

Terraform Pros:

* Use of the same tool and code base for infrastructure as well as cluster management, including the Kubernetes resources. So a team already comfortable with Terraform can easily extend it to be used with Kubernetes.
* Terraform does not install any component inside the Kubernetes cluster, whereas Helm installs Tiller. This can be seen as a positive, but Tiller does some real-time management of running pods, which we will talk about in a bit.

Terraform Cons:

* No support for beta resources. Many implementations are already working with beta resources such as Deployment, DaemonSet and StatefulSet, so not having these available via the Terraform provider reduces the incentive for working with Terraform.
* When there is a dependency between two providers (module do-k8s-cluster creates admin.conf and the Kubernetes provider of module gb-app refers to it), a single Terraform action will not work. The order of execution has to be maintained and run in the following format: terraform apply -target=module.do-k8s-cluster && terraform apply -target=module.gb-app. This becomes tedious and hard to manage when there are multiple modules with provider-based dependencies. This is still an open issue in Terraform; more details can be found in the Terraform repo.
* The Terraform Kubernetes provider is still fairly new.

Helm Pros:

* Since Helm makes API calls to Tiller, all Kubernetes resources are supported. Moreover, Helm templates have advanced constructs such as flow control and pipelines, resulting in a much more flexible deployment template.
* Upgrades and rollbacks are very well implemented and easy to handle in Helm. Also, Tiller running inside the cluster manages the runtime resources effectively.
* The Helm charts repository has a lot of useful charts for various applications.

Helm Cons:

* Helm becomes an additional tool to be maintained, apart from existing tools for infrastructure creation and configuration management.

Conclusion

In terms of sheer capabilities, Helm is far more mature as of today and makes it really simple for the end user to adopt and use it. A great variety of charts also gives you a head start, so you don’t have to reinvent the wheel. Helm’s Tiller component provides a lot of capabilities at runtime which are not present in Terraform due to the inherent nature of the way it is used. On the other hand, Terraform can provision machines and clusters and also manage resources inside them, making it a single tool to learn for managing all of your infrastructure. For managing the applications and resources inside a Kubernetes cluster, though, you have to do a lot of work, and the lack of support for beta objects makes it all the more difficult. I would personally use Terraform for provisioning cloud resources and Kubernetes, and Helm for deploying applications. Time will tell if Terraform gets better at provisioning applications and resources in a Kubernetes cluster.

